The world of generative AI is about to explode, sometime between December and February.

First, let’s understand OpenAI’s GPT-3, the third generation of its Generative Pre-trained Transformer models. It is one of the world’s leading generative AI platforms. The quality of the text generated by GPT-3 is so high that it can be difficult to determine whether or not it was written by a human.

There are four GPT-3 model versions: Ada, Babbage, Curie, and Davinci. Ada is the smallest and cheapest model to use but performs worst, while Davinci is the largest, most expensive, and best performing of the set, though it is also the slowest.

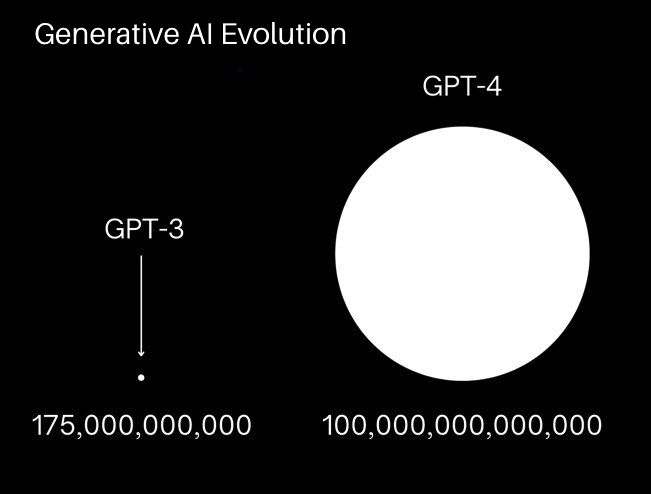

GPT-3 has 175 billion parameters, roughly 10x more than any earlier dense language model at the time of its release. GPT-4 is rumored to have about 100 trillion parameters.

So, if the rumor holds, GPT-4 would have roughly 570x as many parameters as GPT-3.
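
For anyone who wants to check the math, here is a quick sketch of the parameter ratio implied by the two figures quoted above. The 100 trillion number is an unconfirmed rumor, so treat it as an assumption; the function and constant names are made up for this illustration. The ratio compares model size only and says nothing directly about output quality.

```python
# Parameter counts quoted in the post.
GPT3_PARAMS = 175_000_000_000               # 175 billion
GPT4_PARAMS_RUMORED = 100_000_000_000_000   # 100 trillion (rumor, not confirmed)


def parameter_ratio(larger: int, smaller: int) -> float:
    """Return how many times bigger `larger` is than `smaller`."""
    return larger / smaller


ratio = parameter_ratio(GPT4_PARAMS_RUMORED, GPT3_PARAMS)
print(f"Rumored GPT-4 vs GPT-3 parameter count: about {ratio:.0f}x")
```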

What could be possible with OpenAI’s GPT-4?

#ai #generativeai #aitraining #machinelearning #artificialintelligence